How Has the Transformer Architecture Fundamentally Changed the Paradigm of Biomedical Research?

Introduction

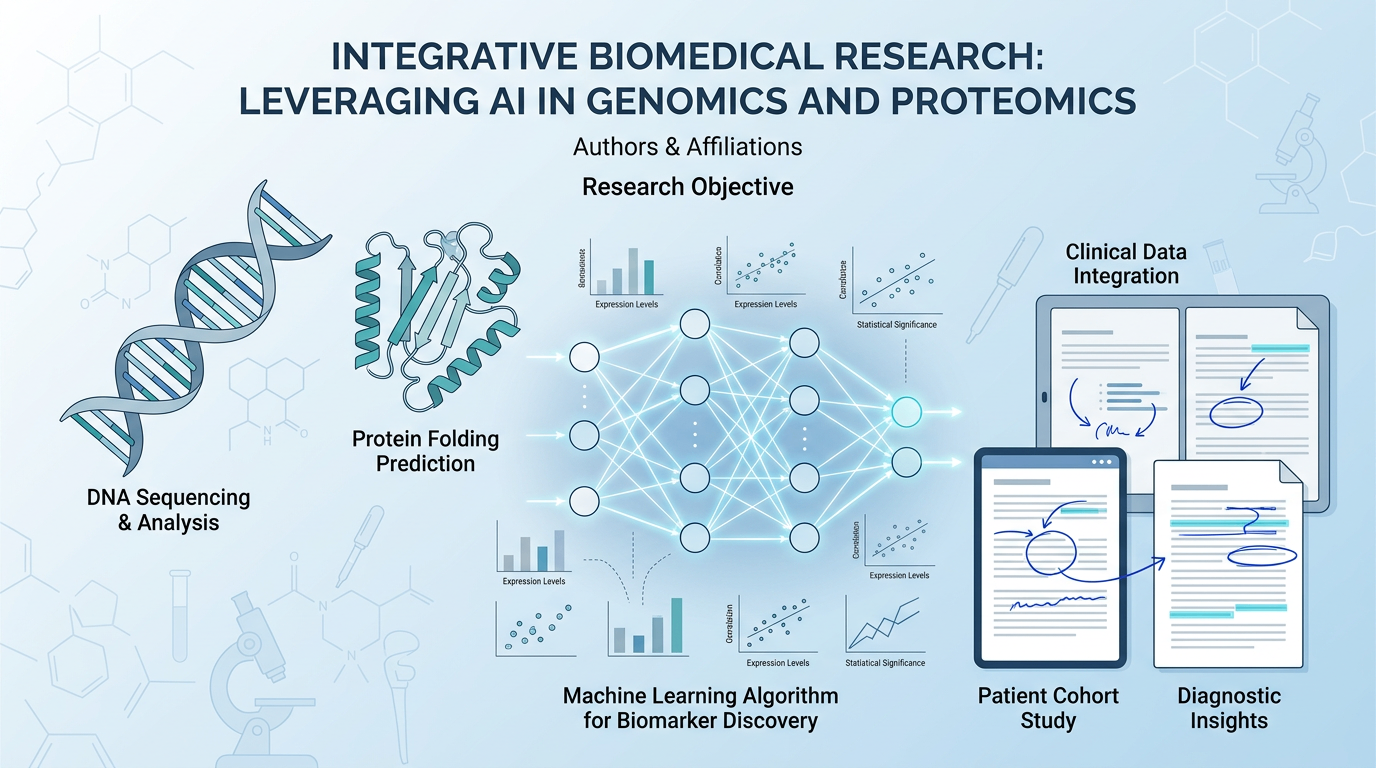

The Transformer architecture has become one of the most influential methods in modern AI, and its impact on biomedical research is growing fast. For medical students, clinicians, and researchers, the challenge is clear: biomedical data is abundant, but it is fragmented, high-dimensional, and difficult to interpret. The Transformer architecture offers a way to learn from sequences, language, images, and molecular signals at scale. It is changing how we discover biomarkers, analyze literature, and design therapeutic tools.

1. Why the Transformer Architecture Matters in Biomedicine

1.1 From fixed rules to data-driven learning

Traditional biomedical analysis often depends on manual feature engineering, rule-based workflows, or narrow statistical models. These methods work well in defined settings, but they struggle with scale and complexity.

The Transformer architecture changes this by using attention mechanisms to identify which parts of a sequence matter most. In practice, this means a model can analyze long texts, gene sequences, or protein chains while preserving context. That is a major advantage in biomedicine, where meaning often depends on relationships across long distances.

1.2 Why biomedical data fits the Transformer architecture

Biomedical research generates multiple data types:

- Clinical narratives

- Electronic health records

- Genomic sequences

- Protein sequences

- Medical images

- Scientific literature

These datasets are large, noisy, and interdependent. The Transformer architecture is especially useful because it can model relationships across these inputs more effectively than many older approaches. It can learn patterns in language, structure, and biology without needing the same level of hand-crafted features.

1.3 A shift in research workflow

The practical change is not only technical. It is also methodological. The Transformer architecture is helping researchers move from narrow task-by-task analysis to integrated knowledge extraction.

This matters because biomedical problems are rarely isolated. A biomarker may appear in literature, in sequencing data, and in clinical records at the same time. A model that can link those signals can shorten discovery cycles and improve hypothesis generation.

2. How the Transformer Architecture Is Used Across Biomedical Research

2.1 Natural language processing for clinical and scientific text

One of the earliest and most visible uses of the Transformer architecture in biomedicine is biomedical NLP. Models based on this architecture can extract entities, classify documents, summarize papers, and identify relations in clinical notes.

This is especially valuable in settings where information is written in complex medical language. For example, a model can help identify adverse events, disease mentions, treatment responses, or eligibility criteria from unstructured text. That reduces manual review time and supports more consistent analysis.

2.2 Genomics and protein sequence analysis

Biological sequences are also well suited to the Transformer architecture. DNA, RNA, and proteins contain long-range dependencies. A change in one region may affect function far away in the sequence.

Transformer-based models can capture these dependencies better than many local-window methods. In protein science, this has supported tasks such as:

- Function prediction

- Structure-related representation learning

- Interaction modeling

- Variant effect analysis

In genomics, the architecture can help learn regulatory patterns and sequence context that may be difficult to capture with simpler models.
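One common way to prepare DNA for a Transformer is to split the sequence into overlapping k-mers, which then serve as the model's tokens. The sketch below is a minimal illustration under that assumption. The function names `kmer_tokenize` and `build_vocab` are hypothetical and not tied to any specific published model, and the sequence is a made-up example.

```python
def kmer_tokenize(sequence: str, k: int = 3, stride: int = 1) -> list[str]:
    """Split a nucleotide sequence into overlapping k-mers."""
    if k <= 0 or stride <= 0:
        raise ValueError("k and stride must be positive")
    seq = sequence.upper()
    return [seq[i:i + k] for i in range(0, len(seq) - k + 1, stride)]


def build_vocab(tokens: list[str]) -> dict[str, int]:
    """Map each distinct k-mer to an integer id, in order of first appearance."""
    vocab: dict[str, int] = {}
    for tok in tokens:
        if tok not in vocab:
            vocab[tok] = len(vocab)
    return vocab


# Made-up sequence for illustration only.
tokens = kmer_tokenize("ATGCGTAC", k=3)
vocab = build_vocab(tokens)
token_ids = [vocab[t] for t in tokens]
print(tokens)
print(token_ids)
```

The integer ids are what an embedding layer would consume. The choice of `k` and `stride` trades vocabulary size against sequence length, so it is usually treated as a tunable setting rather than a fixed rule.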

2.3 Medical imaging and multimodal diagnosis

Although Transformers were first popularized in language tasks, they are now widely used in imaging. In biomedical imaging, they can help analyze radiology scans, pathology slides, and other visual data.

The advantage is not only image recognition. The Transformer architecture can also integrate multimodal inputs, such as an image plus a report plus patient history. That is closer to how clinicians actually reason. It supports a more complete interpretation of the case.

3. What Has Changed in Biomedical Discovery

3.1 Faster literature review and evidence synthesis

Biomedical literature grows too quickly for manual reading alone. Thousands of papers are published every day across medicine and life sciences. The Transformer architecture helps automate literature triage, retrieval, and summarization.

That does not replace expert judgment. But it can help researchers identify relevant studies faster, map relationships between genes and diseases, and track emerging evidence. For busy clinicians and scientists, that saves time and reduces missed signals.

3.2 Better biomarker and drug discovery pipelines

Drug discovery and biomarker research involve searching for weak signals in large datasets. The Transformer architecture supports this by learning complex patterns across molecular, experimental, and textual data.

In practical terms, it can improve:

- Candidate ranking

- Relation extraction from publications

- Pattern recognition in omics data

- Cross-modal integration for target prioritization

This can help researchers narrow the search space before expensive wet-lab validation. That is where the architecture becomes strategically important. It does not eliminate experimentation. It makes experimentation more targeted.

3.3 More scalable hypothesis generation

A major limitation in biomedical science is human bandwidth. Researchers cannot manually inspect every possible relationship in huge datasets. The Transformer architecture helps generate testable hypotheses by surfacing non-obvious associations.

This is especially useful in fields such as oncology, immunology, and rare disease research, where hidden patterns may matter. When models are used carefully, they can suggest promising directions for further study.

4. Why the Transformer Architecture Is Not Just Another Model

4.1 Attention changes how context is learned

The core mechanism of the Transformer architecture is self-attention. Instead of processing information strictly one step at a time, as recurrent models do, it lets the model compare every element in a sequence with every other element and weight their relevance dynamically.

This is important in biomedicine because context often determines meaning. A symptom, mutation, or biomarker may only make sense when viewed alongside other data points. Attention makes that relational reasoning more effective.
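To make the idea concrete, here is a minimal sketch of scaled dot-product attention for a single head, written in plain Python. It is a simplification: in a real Transformer the queries, keys, and values are learned linear projections of the input, whereas this example passes the same toy vectors in for all three. The embedding values are made up.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention for a single head, without masking.

    Each argument is a list of vectors (lists of floats), one per position.
    Returns the output vectors and the attention weight matrix.
    """
    d_k = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        # Compare this position's query with every key in the sequence.
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys
        ]
        row = softmax(scores)
        weights.append(row)
        # The output is the weighted average of all value vectors.
        out = [
            sum(w * v[j] for w, v in zip(row, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
    return outputs, weights


# Toy embeddings for three tokens (values are made up for illustration).
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = attention(x, x, x)
for row in weights:
    print([round(w, 3) for w in row])
```

Each row of `weights` sums to 1 and shows how strongly one position draws on every other position, regardless of how far apart they are in the sequence.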

4.2 Scalability supports large biomedical datasets

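The Transformer architecture also scales well with data and compute, although the cost of standard self-attention grows quadratically with sequence length, which matters for very long inputs. As biomedical datasets continue to expand, this scalability becomes a practical advantage. Larger training corpora can improve representation quality and transferability across tasks.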

For researchers, that means a model trained on broad biomedical corpora can often be adapted to narrower tasks with less labeled data. In resource-limited settings, that can be especially valuable.

4.3 Transfer learning lowers the barrier to adoption

Another reason the Transformer architecture has changed the field is transfer learning. A model pre-trained on large amounts of data can be fine-tuned for specific biomedical tasks.

This reduces the need to train from scratch. It also helps teams with limited datasets or limited annotation budgets. In practice, that can make advanced AI more accessible to hospitals, labs, and academic groups.
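One lightweight form of transfer learning is to keep the pre-trained encoder frozen and train only a small classification head on its outputs. The sketch below illustrates that pattern in plain Python. The `embeddings` list stands in for the outputs of a frozen pre-trained encoder and its values are made up; `train_head` and `predict` are hypothetical helper names, and full fine-tuning would also update the encoder weights.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_head(features, labels, lr=0.5, epochs=500):
    """Fit a logistic-regression head on fixed features with gradient descent."""
    weights = [0.0] * len(features[0])
    bias = 0.0
    n = len(features)
    for _ in range(epochs):
        grad_w = [0.0] * len(weights)
        grad_b = 0.0
        for x, y in zip(features, labels):
            error = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias) - y
            for j, xi in enumerate(x):
                grad_w[j] += error * xi / n
            grad_b += error / n
        weights = [w - lr * g for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b
    return weights, bias


def predict(features, weights, bias):
    """Return 0/1 predictions using a 0.5 probability threshold."""
    return [
        int(sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias) >= 0.5)
        for x in features
    ]


# Assumed outputs of a frozen pre-trained encoder (values are made up).
embeddings = [[0.9, 0.1], [0.8, 0.3], [0.2, 0.9], [0.1, 0.7]]
labels = [1, 1, 0, 0]

head_weights, head_bias = train_head(embeddings, labels)
predictions = predict(embeddings, head_weights, head_bias)
print(predictions)
```

Because only the head's few parameters are trained, this approach needs far less labeled data and compute than training a full model, which is the practical benefit the section describes.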

5. Practical Limitations and Research Cautions

5.1 Data quality still matters

The Transformer architecture is powerful, but it does not solve poor data quality. If input data are biased, incomplete, or inconsistently labeled, the output will reflect those problems.

Biomedical teams should still prioritize:

- Clean annotation standards

- Careful validation

- External testing

- Human expert review

5.2 Interpretability remains a challenge

Attention is not the same as full explanation. Even when a model highlights important tokens or regions, that does not automatically prove causal reasoning. In biomedical research, this distinction matters.

Clinicians and researchers should use the Transformer architecture as decision support, not as a substitute for scientific validation. The safest workflow combines model output with expert oversight and experimental confirmation.

5.3 Regulatory and ethical issues cannot be ignored

Biomedical AI must be evaluated for privacy, fairness, and generalizability. Models trained on one population may not perform equally well in another. Data governance is essential, especially when handling patient records.

This is why deployment in medicine requires more than technical performance. It needs documentation, auditing, and accountability.
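A simple first check for generalizability is to report performance separately for each subgroup or site rather than as a single pooled number. The sketch below computes sensitivity per group in plain Python. The function name `sensitivity_by_group`, the group labels, and the records are all made up for illustration.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """Compute sensitivity (true-positive rate) separately for each subgroup.

    Each record is a (group, true_label, predicted_label) tuple with 0/1 labels.
    Groups with no positive cases are reported as None.
    """
    true_pos = defaultdict(int)
    positives = defaultdict(int)
    groups = set()
    for group, y_true, y_pred in records:
        groups.add(group)
        if y_true == 1:
            positives[group] += 1
            if y_pred == 1:
                true_pos[group] += 1
    return {
        g: (true_pos[g] / positives[g] if positives[g] else None)
        for g in sorted(groups)
    }


# Made-up evaluation records: (group, true label, predicted label).
records = [
    ("site_A", 1, 1), ("site_A", 1, 1), ("site_A", 0, 0), ("site_A", 1, 0),
    ("site_B", 1, 0), ("site_B", 1, 0), ("site_B", 1, 1), ("site_B", 0, 0),
]
result = sensitivity_by_group(records)
for group, value in result.items():
    print(group, value)
```

A large gap between groups is a signal to investigate before deployment, even when the pooled metric looks acceptable.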

6. How Scientists Can Use This Shift in Practice

6.1 A simple implementation framework

For teams exploring the Transformer architecture, a practical workflow looks like this:

- Define one high-value biomedical task.

- Select the data source and quality criteria.

- Choose a pre-trained model if available.

- Fine-tune and validate on held-out data.

- Compare results with existing baselines.

- Review outputs with domain experts.

- Document limitations and error cases.

This structure keeps the project focused and easier to evaluate.
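The validation and baseline steps above can be sketched as follows. This is a minimal illustration in plain Python, not a full evaluation protocol: `placeholder_model` is a hypothetical stand-in for a fine-tuned model, the data are made up, and the baseline simply predicts the most frequent training label.

```python
import random


def split_holdout(examples, test_fraction=0.25, seed=0):
    """Shuffle with a fixed seed and hold out a fraction for testing."""
    shuffled = examples[:]
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, int(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def majority_baseline(train_labels):
    """Return the most frequent training label, the simplest baseline to beat."""
    return max(set(train_labels), key=train_labels.count)


def placeholder_model(score):
    """Hypothetical stand-in for a fine-tuned model's prediction function."""
    return int(score > 0.5)


# Toy data: (score, label) pairs; values are made up for illustration.
examples = [(0.9, 1), (0.8, 1), (0.7, 1), (0.6, 1),
            (0.65, 1), (0.3, 0), (0.2, 0), (0.4, 0)]
train, test = split_holdout(examples)

y_true = [label for _, label in test]
model_acc = accuracy(y_true, [placeholder_model(s) for s, _ in test])
baseline_label = majority_baseline([label for _, label in train])
baseline_acc = accuracy(y_true, [baseline_label] * len(test))
print(f"model accuracy: {model_acc:.2f}, baseline accuracy: {baseline_acc:.2f}")
```

With clinical data, records from the same patient should usually stay on the same side of the split, so a patient-level split is often a safer choice than the record-level shuffle used here.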

6.2 Where productivity tools can help

Not every research team has the time to build custom AI pipelines. That is where specialized productivity platforms become useful. Tools such as scifocus.ai can help researchers streamline literature work, organize scientific information, and support more efficient research writing.

For medical students, physicians, and scientists, this can reduce repetitive effort and improve focus on analysis, interpretation, and decision-making. In a field where time and accuracy both matter, that operational support is valuable.

Conclusion

The Transformer architecture has fundamentally changed biomedical research by making it possible to learn from long-range dependencies, integrate multimodal data, and accelerate knowledge discovery. It has improved text mining, genomic analysis, imaging workflows, and hypothesis generation. But its value is greatest when paired with rigorous validation, expert oversight, and clean data.

For teams that want to work faster without sacrificing scientific quality, tools like scifocus.ai can help bridge the gap between AI capability and day-to-day research execution. If your goal is to read faster, synthesize better, and manage biomedical research more efficiently, this is a practical next step.
